The Rise Of Enterprise DevOps – In Conversation With Alan King

The worldwide DevOps platform market is growing, and growing rapidly. Between 2016 and 2020, this market will expand by a remarkable ~19.4%. By the first half of 2017, 1 out of every 10 software developers across the globe had already reported full adoption of DevOps – while a further 63% were actively involved with DevOps models/practices in some way or the other. In fact, only a measly 6% of companies had not heard about DevOps – clearly underlining how widespread awareness of DevOps practices has become. Alan King, the CEO of Dusk Mobile – a leading Australia-based field service and asset management company – here speaks about the nature of DevOps, how it is being adopted, related technologies, and a whole lot more.

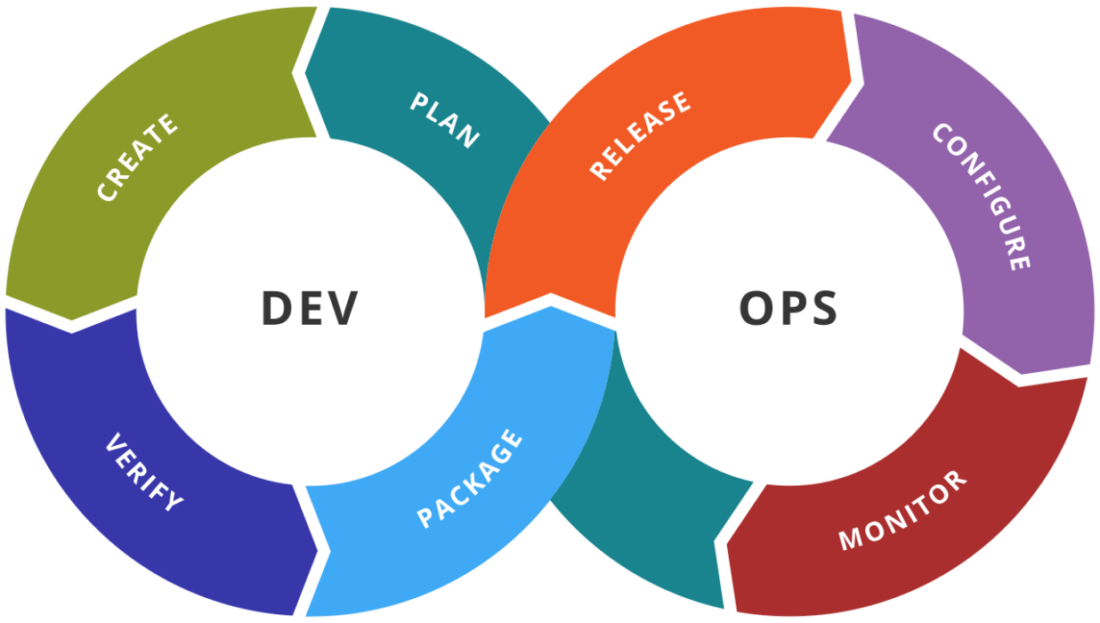

- What exactly is DevOps?

A: Given that there are no ‘definitions’ of DevOps per se, that is a very good question to start with. I like to think of DevOps as a medium that enables better communication and better collaboration between the IT operations and the development departments of any enterprise. A variety of broad philosophies, cultures, practices, and of course, software tools, have to be used together – to make high-velocity delivery of applications and services possible. It’s all about breaking down the traditional ‘silos’ between development and operations. A DevOps platform can be created with the help of this collaboration on one hand, and adoption of ‘agile infrastructures’ on the other. That said, agile operations and DevOps are not one and the same. I’ll say that ‘Agile’ came about as a response to, or an improvement over, waterfall methodologies. DevOps makes agile practices stronger by plugging the communication gaps.

- For enterprises, how important is continuous integration, delivery and deployment?

A: Smart automation is the name of the game for modern-day businesses – and that’s precisely where these new practices come into the picture. Continuous integration is all about enabling developers to commit their code to a shared repository – bypassing the problems of one-time integration at the end of the development cycle. With CI, all modifications in the codebase are tested as and when they are made. Then, continuous delivery, or CD, is all about easing the pain points of code deployment to production. The entire procedure of software delivery is automated, ensuring that the codebase remains deployable at all times. Continuous deployment takes things a couple of notches further, by doing away with the need for an actual person to determine what to deploy. Every build that goes through the full test cycle gets deployed automatically. I look at continuous deployment as an extension of continuous delivery.
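
The difference between continuous delivery and continuous deployment described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `Mode`, `Build` and `next_step` are hypothetical names invented for this example and do not belong to any real CI/CD tool.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    CONTINUOUS_DELIVERY = "delivery"      # a person approves each release
    CONTINUOUS_DEPLOYMENT = "deployment"  # passing builds ship automatically


@dataclass
class Build:
    commit: str          # identifier of the change being built
    tests_passed: bool   # result of the full automated test cycle


def next_step(build: Build, mode: Mode, approved: bool = False) -> str:
    """Decide what happens to a build once the CI test run has finished."""
    if not build.tests_passed:
        # In both modes, a failing build never reaches production.
        return "rejected"
    if mode is Mode.CONTINUOUS_DEPLOYMENT:
        # No human decision: every passing build is deployed.
        return "deployed"
    # Continuous delivery: the build is deployable, but a person decides.
    return "deployed" if approved else "awaiting approval"
```

With these assumptions, `next_step(Build("abc123", True), Mode.CONTINUOUS_DELIVERY)` leaves a passing build waiting for sign-off, while the same build under `Mode.CONTINUOUS_DEPLOYMENT` goes straight out.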

- We keep hearing a lot about ‘DevOps tools’ and ‘DevOps system’. Is there a physical DevOps product as such?

A: No, there isn’t. I’ll, in fact, go a step further and say that this is the single-biggest point of confusion about DevOps. Just like there is nothing called an ‘agile product’, you simply cannot pinpoint something as ‘my DevOps tool’ or ‘that DevOps product’. Instead, it would be better to think of DevOps as a new-age development practice or a broad philosophy. It is, let’s say, a ‘culture revolution’ for enterprises – pushing up deployment velocity levels and optimizing the speed of delivery of value to customers. Yet another thing that I would like to reiterate is that DevOps is completely technology-agnostic. Going a bit deeper, it would be a mistake to consider DevOps synonymous with automation tools either – although such tools are a prerequisite. Automation, on average, makes up less than 10% of the overall DevOps practices. It’s about changing up the IT culture, speeding up delivery times, revolutionizing communication and collaborative capabilities. ‘People’ and ‘Process’ are, I feel, the two important pillars of DevOps.

- What is the DevOps-As-A-Service delivery model?

A: If we talk about the newest technologies in the realm of application development, DevOps-As-A-Service model implementations would feature right near the top. Instead of chalking out and deploying the practices and syncing automation practices for CD on a standalone basis, this delivery model makes it possible to migrate all of these processes to a hosted virtual platform…that is…the cloud. It’s like creating discrete packages of DevOps toolsets, and storing them on the cloud, and offering them ‘-as-a-Service’ to enterprises. The DevOps-As-A-Service model eases collaboration, resolves data complexities, speeds up testing & deployment phases, and has a host of other benefits. However, security parameters and the probable risks of DevOps infrastructure outsourcing are things that we need to watch out for.

- Specialists or Generalists – what should DevOps engineers be?

A: DevOps brings down the great interdisciplinary divides between departments in a company – making it less of a ‘walled garden’ than it ever was. As such, DevOps engineers need to have multidisciplinary skills – and hence, the demand is more for generalists…people who can identify challenges, form automation or software-based solutions, implement them optimally, and resolve the issues. For example, in a DevOps environment, it’s not enough for a Quality Analyst to only do testing – he or she needs to have a basic idea about development and coding as well. However, in the search for generalists, we must not lose sight of the advantages of the focused and deep knowledge levels that specialists bring with them. The typical ‘two pizza’ DevOps teams should mostly have generalists…but having a couple of specialists as well is not going to hurt.

- How is SysOps different from DevOps? Is there an overlap between the two concepts?

A: First up, I want to point out that both SysOps and DevOps are focal points of interest in cloud computing. If an enterprise is planning to ditch ‘old IT practices’ in favour of ‘cloud-based IT’, it needs to be aware of both – and determine which of the two would be best-suited for it. Unlike the strong collaboration practices that form the basis of DevOps, SysOps is powered by the Information Technology Infrastructure Library (ITIL). SysOps engineers are typically focused on server performance and making work processes more seamless. On the other hand, code, and code only, is what DevOps engineers attach prime importance to. DevOps is also, of course, far more flexible than SysOps. In a cloud environment, there are points where SysOps and DevOps processes can overlap. Ideally, the two practices or methodologies should work in tandem.

- How important is CALMS for true digital transformation?

A: DevOps, as I have already highlighted, is not a tangible product – but a culture or a philosophy that enterprises have to embrace. CALMS is an acronym that grew out of ‘CAMS’, coined by Damon Edwards and John Willis back in 2010; the ‘L’ for Lean was added later by Jez Humble. It stands for Culture, Automation, Lean…as in lean or agile practices, Measurement and Sharing. Measurement, over here, refers to the monitoring of the key metrics or KPIs, including error monitoring – to ensure that DevOps is actually delivering the desired value to enterprises. Also, Sharing is all about enabling people to work together and share their work, or ideas, or experiences. For any DevOps model to be successful, each of these 5 aspects has to be followed and balanced carefully. As I love to say, for enterprise-level digital transformation to take place, it is very important to keep CALMS!

- How are DevOps adoption levels faring? How excited are enterprises about DevOps?

A: See, the excitement is there. By 2017, 84% of enterprises had adopted and deployed DevOps in some way or the other. Practically every present-generation tech leader or influencer is at least aware of the concept of DevOps. However, only 30% of enterprises reported company-wide DevOps adoption last year. This indicates that while the adoption has certainly been WIDE enough, it has, perhaps, not been as DEEP as it should have been…till now. Investment in DevOps is constantly rising. We need to take a closer look at why enterprises are not yet implementing DevOps practices on a mass scale – but I have no doubt that DevOps will be the next big thing for enterprise-level digital modernization.

- 2018 has been billed as the ‘Year Of Enterprise DevOps’. Your take on that?

A: I see it as a natural progression, to be honest. DevOps has been around for nearly a decade now – and we can safely say that it has moved on from the experimentation stage. Last year was the ‘year of the DevOps’ – and it well and truly lived up to that billing. We saw that the deploy frequency rose to 1500+ in 2017 – many, many times more than the 200-odd deployments in 2014. In a 2017 Q1 survey, it was found that 27% of the respondents have plans to implement DevOps in the next few months. I feel that by the end of this year, several big companies will start adopting DevOps at scale. Enterprise cultures are changing, there are ongoing improvements in tech automation, and the DevOps revolution is certainly gaining momentum.

- How critical are the issues of security and compliance in the context of DevOps?

A: There are myths going around about how security is not really needed for DevOps, and how configuration management tools can take care of all the security needs. It’s high time we knew the truth – and the truth is, the need for security specialists is paramount for DevOps. In fact, enterprises have to go beyond thinking about only security professionals, and focus on a culture overhaul and built-in software robustness. Automated testing modules need to be incorporated right through the development cycle, at multiple critical points. Security, audit and compliance checks are things that have to be done repeatedly and frequently – instead of considering security as a one-shot game. In a cloud computing environment, there are many sources of risks – like data thefts and loss, account hijacks, DDoS attacks, system/application vulnerabilities and the like – and without strong security parameters, the appeal of DevOps is likely to remain limited. We need to make things safer for customers.

- What is all the buzz about ‘serverless technology’? Is it helping the ‘function-as-a-service’ market to grow?

A: First of all, let me quickly point out that serverless technology is not truly ‘serverless’! Servers are still being used – but in serverless tech, the way in which applications are run changes radically from how they worked in traditional cloud computing. We can very well call serverless technology Cloud 2.0. Apart from bringing down operational costs, squeezing the time-to-market, and bolstering the scalability levels – serverless technology helps in improving latency, and allows customers to experience a richer, better interface. Function-as-a-service, or FaaS, comes into the equation when serverless architectures are used for serverless computing. Bit by bit, serverless technology and FaaS are facilitating the removal of containers and orchestration edges. By 2021, the global FaaS market will be worth $7.72 billion.

- As DevOps is growing, a new group of ‘Site Reliability Managers’ is emerging. Your thoughts?

A: This is actually rather interesting. While DevOps is all about removing the silos between operations and development, and establishing a collaborative culture – it is also giving rise to a separate new group of DevOps engineers. Given their multitasking capabilities, many enterprises offer them the position of ‘site reliability managers’. Apart from having a fairly in-depth idea of the various tools and automation technologies, a DevOps engineer needs to have updated security training, a focus on collaboration, customer-orientation, and the capability to automate testing. Network awareness is another must-have feature, and they need to be capable of visualizing the big picture – and how DevOps can make a difference over there. With the help of a team of DevOps site reliability managers, the frequency of code deployments by an organization can go up by 30 times.

- How important is the measurement of key metrics for DevOps success?

A: This is true for almost every new technology – unless you track and monitor it, you cannot be sure about its performance. For DevOps, I cannot possibly overstate the importance of identifying key performance indicators, or KPIs, that balance speed and stability. Change volume, lead time, deployment frequency, failed deployment percentages, mean time to detection and recovery, service-level agreements and performance metrics are some of the most important DevOps metrics that need to be monitored. Continuous tracking of these metrics allows enterprises to detect bugs quickly – and sort out the glitches before delivery. If you need to understand the value of DevOps, you need to measure the metrics.
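
A few of the metrics named above (deployment frequency, failed deployment percentage, and mean time to recovery) reduce to simple arithmetic over a deployment log. The sketch below shows one way they might be computed; the `Deployment` record and the function names are hypothetical, chosen for illustration, and real monitoring tools will define these metrics with their own nuances.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class Deployment:
    finished_at: datetime                    # when the deployment completed
    failed: bool                             # did it cause a failure in production?
    recovered_at: Optional[datetime] = None  # when service was restored, if it failed


def deployment_frequency(deploys: List[Deployment], days: int) -> float:
    """Average number of deployments per day over the observed window."""
    return len(deploys) / days


def failed_deployment_percentage(deploys: List[Deployment]) -> float:
    """Share of deployments that failed, as a percentage."""
    if not deploys:
        return 0.0
    return 100.0 * sum(d.failed for d in deploys) / len(deploys)


def mean_time_to_recovery(deploys: List[Deployment]) -> Optional[timedelta]:
    """Average time from a failed deployment to recovery, or None if no data."""
    durations = [
        d.recovered_at - d.finished_at
        for d in deploys
        if d.failed and d.recovered_at is not None
    ]
    if not durations:
        return None
    return sum(durations, timedelta()) / len(durations)
```

Tracked over time, the first function speaks to speed and the other two to stability, which is the balance the KPIs are meant to capture.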

- Is there a risk of technical metrics overshadowing the business metrics of DevOps?

A: You can track a lot of metrics – but what you track is going to make a difference. Ideally, enterprises should not be planning to track more than 8-10 metrics. What’s more, each of the chosen metrics should have a direct impact on the business performance. Enterprises need to understand the three main goals – quality, performance, and budget – and start monitoring DevOps metrics accordingly. Technical metrics are obviously important…but they must not block the important business metrics from view. For instance, the average user will not be interested in a temporary hardware failure, or the volume of coding done. Once again, it’s about maintaining a balance – a scenario where both technical metrics and business metrics are being tracked.

- How will DevOps make more change, innovation and experimentation possible?

A: Before going into that, let me just clear the air about the term ‘innovation’ in this context. From a business perspective, innovation refers to new capabilities that generate additional value for customers. Since DevOps adoption invariably involves a total reconfiguration of the IT value stream, it opens up opportunities for greater experimentation…leading up to more business innovation success. The entire process will be improved, and not just the software tools. A new methodology, BizOps, can be obtained by combining the improved business collaborations with DevOps models. With the help of DevOps, code changes can fail 60 times less often, update deployments can be 30 times faster, and failure recovery can be a whopping 165 times quicker. Technologies are changing, markets are changing…and DevOps will help enterprises stay on top of such changes, all the time.

- DevOps is increasingly putting the focus on smart automation. Will zero-touch automation be a real possibility in future?

A: Oh well, zero-touch automation is the goal…the ideal objective…for a DevOps model. To achieve that, one needs to understand carefully the 4 key tenets of DevOps – which are State, Visibility, Job Run-time and Operations. By following a systematic DevOps adoption roadmap, enterprises can definitely move towards zero-touch automation – a stage where virtual resources can be called by developers to perform testing at any time. I am concerned over one thing though – as we move towards zero-touch, we are slowly giving up on the ‘human touch’…the instincts and the hunches of an experienced tester. Complete zero-touch automation with DevOps is a very real possibility – but we need to examine whether it is desirable for all cases.

- Take us through the 6Cs of the DevOps cycle.

A: This is one of the first things enterprises need to get familiar with, before considering whether to implement DevOps or not. The cycle begins with Continuous Business Planning, involving the identification of the required skills and resources. Next up is Collaborative Development, where the initial plans and programs are chalked out. The third ‘C’ in the cycle is Continuous Testing. Integration testing and unit testing are the most important over here. Then comes Continuous Release & Deployment, followed by Continuous Monitoring. The final piece in the puzzle is Customer Feedback, which is vital to learn about whether the DevOps model is indeed delivering the expected value. All the phases, or the ‘C’s, have to be managed very carefully.

- Is automated testing, or test scripts, a part of DevOps?

A: Very much so. In fact, I would go as far as saying that automated testing lies at the core of DevOps. The two biggest focal areas of DevOps are Quality and Time – and with test automation, both can be optimized. Automated test scripts help in prompt detection of bugs, unlike manual tests…where the test cycle can take up several weeks. The quality factor also gets a lift – since there are no chances of human errors creeping in. In addition, running automated tests can deliver significant cost-advantages too. The implementation of enterprise DevOps is slowly transforming traditional quality analysts to Test Automation Engineers. I’ll put it this way: if there are plenty of tests to be done manually, the chances of DevOps success go down considerably.

- Do DevOps and microservices go hand in hand? How?

A: The microservices architecture is growing in tandem with DevOps in the current enterprise environment. The two complement each other, push up the adoption rates, and even open up newer experimentation possibilities. Since microservices are, by nature, modular – they fit easily in the DevOps platform, which is all about continuous integration, deployment and delivery. These microservices make it easy to make incremental changes to DevOps. Broadly speaking, microservices help in boosting the availability, reliability, deployability, modifiability and scalability of DevOps. Since microservices typically make the most of agile methodologies, the task of managing DevOps becomes that much simpler too. A big advantage over here is the fact that both DevOps and microservices are tool-agnostic and platform-agnostic.

- ‘Build’ or ‘Buy’ – What should be the approach towards DevOps adoption?

A: That really depends on the customer…in our case, the enterprise…and its particular needs and preferences. For starters, it has to be understood that ‘building’ something in the software domain does not necessarily mean creating something new from scratch. Now, DevOps is not maths, there is no ‘one particular correct solution’, and multiple idiosyncratic philosophies may be available as alternatives. In such a scenario – I feel that the ‘buy’ option is more appealing for enterprises – firstly, because it won’t make much sense to use up resources…manpower included…to create something that falls outside a company’s core competency. Secondly, and probably more importantly, building DevOps in-house is a time-consuming affair – and in the present age of mounting competition, hardly anyone is going to have that sort of time. It makes much more sense to purchase customizable, end-to-end enterprise DevOps toolchains from vendors. Of course, all the capabilities of such toolchains have to be tested and pre-checked.

- Is DevOps fueling an accompanying rise in container technology?

A: Very much so…and the reason for that should be self-evident. The chief underlying reason for DevOps implementation by enterprises is a huge improvement in the application delivery velocities, while maintaining high quality standards. There are, however, many enterprises that are not quite prepared for this culture change – and container technology comes to the rescue in such situations. With the help of containers, applications previously stored on cloud networks can be redeployed easily, and without any problems. For instance, the same images, or the same Unit Test modules, can be used multiple times. As a result of this, Container-as-a-Service (CaaS) platforms are also growing in popularity. Applications with microservices functioning with containers can be delivered really quickly – and that is precisely what DevOps is all about.

- Tell us something about the journey from DevOps to DevSecOps.

A: Agile methodology arrived as an improvement over the Waterfall approach. From agile, broad philosophies involving collaboration and culture changes brought us to DevOps. Now, with concerns over security mounting – the need is to move from DevOps to true DevSecOps. Technological changes and cultural changes have to be balanced and integrated in DevOps models – for the additional security assurance. Once again, the code is what requires the closest examination – and both Security as Code, and Infrastructure as Code are principles that need to be adopted. This ‘Security as Code’ is the essence of DevSecOps. Apart from bypassing the risks stemming from outdated tools in traditional CI/CD models, DevSecOps also boosts agile practices, enhances collaboration, helps in bug identification, and improves responsiveness to changes. DevOps with embedded security, that’s what DevSecOps is.
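
To give a flavour of what ‘Security as Code’ can look like in practice, here is a minimal sketch in which security rules are written as ordinary, version-controlled code and run against a deployment configuration on every build. Everything in it is an assumption made for illustration: the rule names, the configuration keys (`tls_enabled`, `public_ports`, `secrets_source`) and the `violations` helper are hypothetical and not taken from any particular tool.

```python
from typing import Any, Callable, Dict, List

Config = Dict[str, Any]
Rule = Callable[[Config], bool]

# Hypothetical policy: each rule returns True when the config is compliant.
RULES: Dict[str, Rule] = {
    "TLS must be enabled": lambda cfg: cfg.get("tls_enabled") is True,
    "SSH port must not be public": lambda cfg: 22 not in cfg.get("public_ports", []),
    "secrets must come from a vault": lambda cfg: cfg.get("secrets_source") == "vault",
}


def violations(cfg: Config) -> List[str]:
    """Return the names of all rules that the given configuration breaks."""
    return [name for name, rule in RULES.items() if not rule(cfg)]
```

A pipeline step could call `violations(config)` and fail the build whenever the returned list is non-empty, so that the security check is repeated automatically with each change rather than performed once.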

- Is the DevOps toolchain fragmented?

A: Unfortunately, yes. If anything, fragmentation has become a major barrier in the path of widespread DevOps adoption by enterprises. The primary reason for this developer toolset fragmentation is the arrival of more and more tools for DevOps practically every quarter. This is leading to this space getting overcrowded – and selecting the best central management platform is becoming increasingly complex. Both packaged solutions and open-source solutions are available, causing further fragmentation of the DevOps toolchain. I do hope that these problems get sorted out soon enough, so that DevOps managers do not have to struggle with multiple platforms and models and tools at any single point in time.

- Is DevOps reducing complexity, or adding to it?

A: Well, this one is a double-edged sword, to be frank. There are many enterprises which feel, correctly, that they won’t be able to survive in the long run without switching over to a DevOps philosophy. For them, DevOps is a way of avoiding the increasing complexities of a digital business. However, we also need to keep in mind that DevOps – while not a tool itself – involves the usage of a large number of tools, which can potentially make things complicated. In addition, there is also the small matter of altering long-standing organizational culture standards. If not managed smartly enough, the complexities can spiral out of control pretty soon. That is a big reason why I recommend that enterprises get in touch with reliable field service software companies, and get custom DevOps models from them. After all, DevOps should make things simpler, right?

- How big is the challenge of moving away from legacy infrastructure?

A: First, I want to clarify over here that ‘legacy’ in the context of DevOps refers to both ‘legacy systems’…the outdated systems and technology…as well as ‘legacy thinking’. These are major barriers for a firm that is looking to switch to a ‘cloud-first’ methodology. The challenge lies in removing the inertia, understanding the need for application modernization, and realizing that fitting DevOps in old legacy infrastructure will be like trying to fit a square peg in a round hole – causing friction, and delivering very little value. Enterprises have to lay down detailed plans for switching over from mainframe-centric workflows to DevOps-focused workflows. It is a serious challenge…but it’s not something that cannot be overcome.

- What are the biggest barriers to DevOps growth?

A: As we just discussed, moving from a legacy system to cloud computing and DevOps is a critical task. The fact that it is difficult to get a buy-in from all the stakeholders is another point of concern, as is the general unwillingness of enterprises to experiment with new technology. Given that DevOps requires a shift in cultural values as well, existing static cultural differences also pose problems. Other important barriers include problems or negligence in monitoring, and the complexities of the architecture design. Unavailability of adequate budgets can be an issue as well, as can not having the necessary skillset. Many companies also do not bother preparing thorough DevOps plans before the implementation stage, and that can lead to problems. Finally, we need to remember that adopting DevOps is not merely about forming just another team at the office!

- Are there too many enterprise software tools at present?

A: It looks that way for sure. ‘Tool turbulence’ is a growing point of concern – and enterprises need to be wary of getting involved with too many tools, and losing their focus. It is vital to maintain a balance between under-automation and over-automation with DevOps. Businesses have to determine their precise requirements related to development, testing and deployment – and then, list out the tools that will be absolutely necessary in the DevOps model. In my view, it is important for enterprises to first identify the manual practices that are taking up a lot of time, and can be effectively automated. The DevOps model should be built around those practices, making them automated. Too many tools should never be brought in, since maintenance and monitoring will become problematic – and things will become too complex. The principle is simple enough – use only those software tools that are absolutely necessary.